- Welcome to Geeksww.com

MySQL open files limit: open-files-limit VS open_files_limit on Linux

Did you think that underscores and hyphens in MySQL server option names can be used interchangeably in configuration files? Read this tutorial to find out about an exception to this general rule.

We recently faced this issue where MySQL server showed different behavior while using open-files-limit vs open_files_limit in MySQL server configuration file and also the limit for this variable is not properly documented in MySQL manual.

If you look at MySQL documentation for open-files-limit, the documentation says that limit is 65535 but this is not true in case you are running your MySQL server on Linux. In this tutorial, I am going to try to explain the difference between open-files-limit and open_files_limit and also the upper limit for open files MySQL variable.

Lets start with the difference first

The general rule is: underscore '_' and hyphen '-' in option names can be used interchangeably in MySQL server configuration files. But, in case of open files limit there is a bug that can prevent you from setting higher values in case you used open_files_limit in your configuration file. This isn't entirely true actually, let me explain.

You can use open-files-limit and open_files_limit in configuration files to set an upper limit on open files but if you wish to set a limit higher than 65535 then you have to use open-files-limit instead of open_files_limit. This bug was recently fixed in newer MySQL versions. In a nutshell, both are permitted and valid syntax but if you want to set limit higher than 65535 files using open_files_limit (only works on Linux) then you have to use MySQL version >= 5.1.52.

Why is it important to set limits that high?

The open file limit becomes important for performance when MySQL server has to open several files at once. One particular case that immediately comes to mind is when you have thousands of tables in your MySQL server (in all schemas) and you are running a query that touches all of them e.g. counting no. of columns in all tables in all schemas etc or joing the COLUMNS and TABLES tables in information_schema. If your limit is set to a lower value then is required by MySQL to run the query then your query might fail causing other problems or might take too long to execute. Please refer to another similar tutorial here for more info on table_cache configuration variable.

Note: Please refer to MySQL bug 58121 for more info.

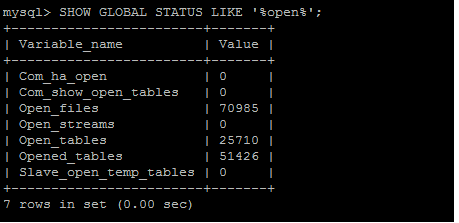

Here is a proof that MySQL can open more files than 65K even in version 5.0.67 but only if you use open-files-limit in your MySQL server's configuration file or use MySQL server version >= 5.1.52 (tested on 5.1.52) and also you must be using Linux to run MySQL server.

Did this tutorial help a little? How about buy me a cup of coffee?

Please feel free to use the comments form below if you have any questions or need more explanation on anything. I do not guarantee a response.

IMPORTANT: You must thoroughy test any instructions on a production-like test environment first before trying anything on production systems. And, make sure it is tested for security, privacy, and safety. See our terms here.

tags cloud

tags cloud

popular searches

popular searches

free download for mysql database server 5.1.5, bison, gearman, source code, php, install cairo, java, laptop, install mysql, linux, mysql, mysql mysql, mysql initialization, tools, ubuntu

Similar Tutorials:

- How to check MySQL version number?

- MySQL query to find all views in a database

- Download, configure, compile, and install MySQL 5.5 from source code on Linux

- How to find MySQL server uptime?

- How to set innodb_flush_log_at_timeout in MySQL 5.6?

Tutorials in 'Database Management Systems > MySQL' (more):

- What should be monitored on a busy Database/MysQL server?

- How to speed up a Mysql replica?

- The server requested authentication method unknown to the client [phpmyadmin]

- How to set default values for MySQL JSON columns

- How to delete rows in table when PK is referenced in another table

Comments (write a comment):

See http://bugs.mysql.com/40368, the issue has less to do with the 64k and everything with a parsing bug in mysqld_safe Posted by: Arjen L on Dec 01, 2010

I can actually think of at least one reason NOT TO set your maxes too high (even in just the general sense, not only # of tables): Posted by: Scriptster on Dec 10, 2010

So, anyways, back to the point:

If the amount of RAM you've allocated becomes higher than amount of physical RAM, the server will start jiggling data back and forth from the swap instead and it's VERY slow. Posted by: Scriptster on Dec 10, 2010

Lastly: regarding lack of RAM,

You would think that the tables are preloaded because you've allowed to keep a bunch of them open but they are essentially still on HDD and are still subject to the same disk bottleneck. Posted by: Scriptster on Dec 10, 2010

Is Delay_key_write impact ope_limit_files situation such as /usr/sbin/mysqld: Can't open file: '.tablename.frm' (errno: 24)?

Set Delay_key_write off...was ON

Pls let me know Posted by: sonny on Jul 25, 2011

interesting, well I haven't come across a situation like that but this might be possible since delay_key_write=OFF will force MySQL to modify the index files too and since MyISAM has separate index files so it'd try to open more index files to update them (although index file descriptor is shared between all sessions). Also, if you look at the meaning of error no. 24 you will know that it is a no. of open files issue.

I'd recommend you to set the limit higher and try again. Was that the only change you made to the system config?

Thanks. Posted by: Shahryar G on Jul 30, 2011

sir, i have mysqldatabase have 55 lak record in linux platform. i have 150 users. whiel connect 130 users with database through our application, saving record time tis taking 2,3 sec. when connect behind 130 users saving time is taking more than 30 sec. how to reduce response time from 30sec to 2 sec. we have 8GB RAM, xeon processor quad core, our database size is nearly 12 to 20 gb. please tell me how to solve it. Posted by: J.Anuraja on Nov 21, 2011

@J.Anuraja: there can be several optimizations you can make e.g. increasing you innodb_buffer_pool_size (if there is room), adding indexes (depending upon the query itself), optimize OS so it does not do swapping, turn change buffering ON for updates (works in 5.5).

Please copy and paste the table definition and update query. also, try using the forum for such posts.

Thanks. Posted by: shahryar g on Dec 12, 2011

Hello.

I'm having a problem with the "open_files_limit" which is not being updated after reboot.

Currently i have it defined like this:

[mysqld]

open-files-limit=20480

I currently have mySql 5.6.

Anyone having this problem ? Posted by: Simon on May 15, 2014

Simon,

Did you update the nofile value for the mysql user in etc/security/limits.conf ?

If yes, are you using systemd ? If yes you have to add LimitNOFILE to the service file.

Hope will help you ! Posted by: goldyfruit on Jan 14, 2015